Using a modern monitor with an Amiga 1200 over VGA (posted 2020-09-16)

After talking about the complexities involved with connecting older computers to newer displays, I was in the mood for a success story.

(Update: see new info at the bottom of the page.)

This is how I finally got a decent-looking picture from my Amiga 1200 on a Dell U2312HM monitor using a simple Amiga-to-VGA adapter (or cable).

This is the adapter I'm talking about. It has the Amiga 23-pin video connector on one end and a standard VGA connector on the other. There are no electronics inside; it only lets you connect a VGA cable to the Amiga. It's up to the Amiga to generate a video signal that the monitor will understand.

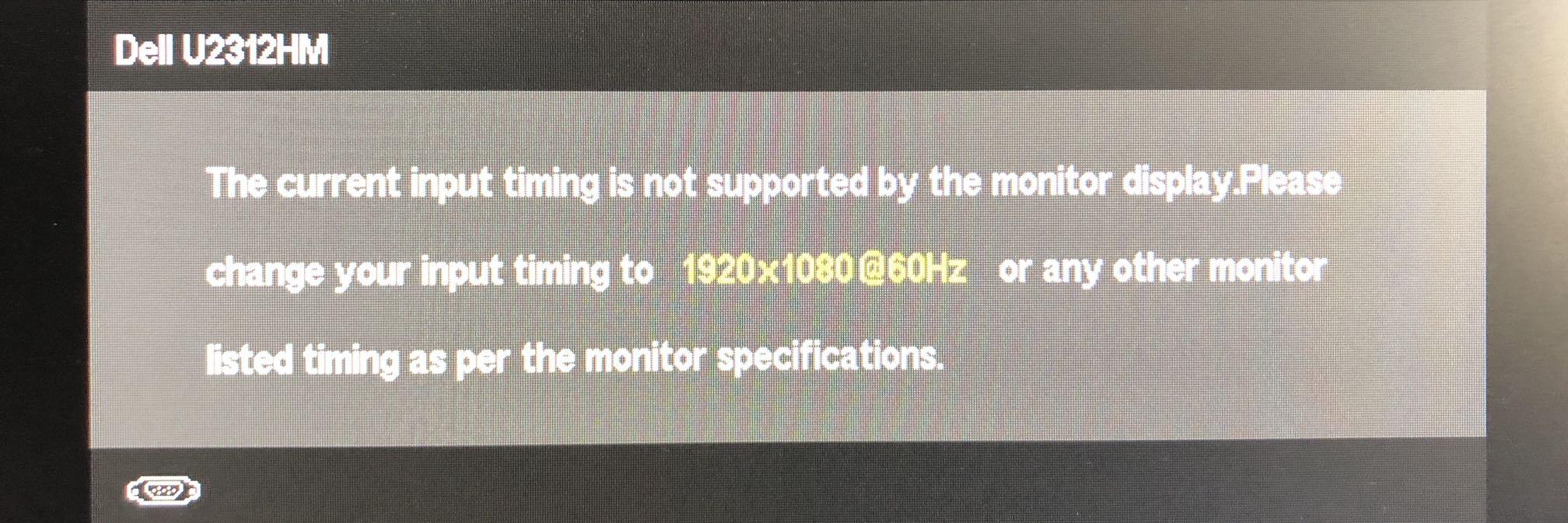

The monitor in question is a 23" (58 cm) LCD monitor with 1920x1080 resolution from 2011. It has VGA, HDMI and DisplayPort inputs. The first thing I tried was the DblPAL monitor driver. No dice.

For some reason, when Commodore came up with the AGA chipset, which can handle the frequencies needed to drive a VGA monitor, the timing they chose for the double PAL and NTSC (DblPAL and DblNTSC) and Multiscan Productivity screenmodes deviates significantly from what a VGA monitor expects. However, they realized this and included an additional pseudo monitor driver, VGAonly, that adjusts the timing of the other screenmodes when it is present in the Devs/Monitors drawer. So with VGAonly installed I at least got an image.

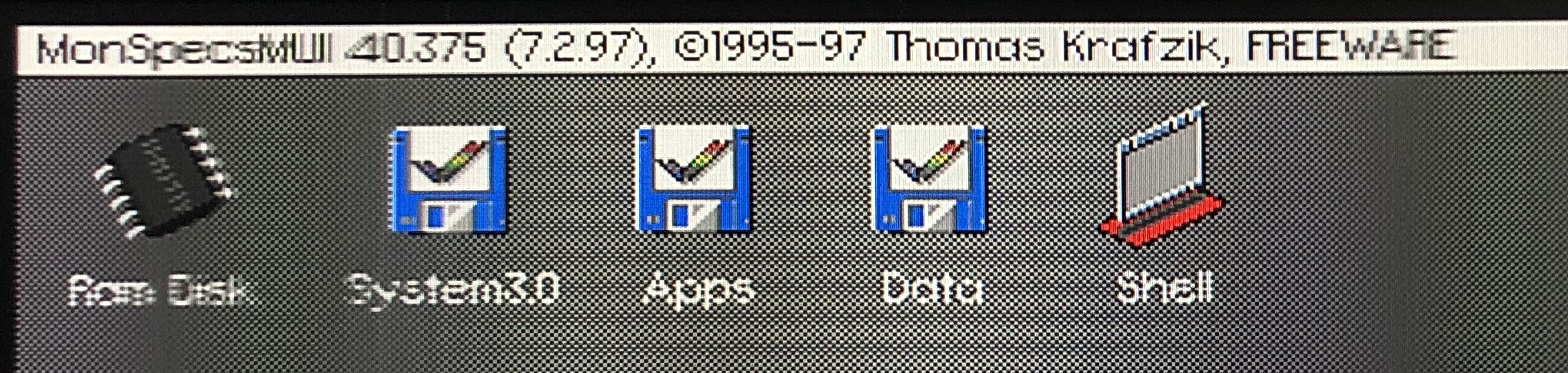

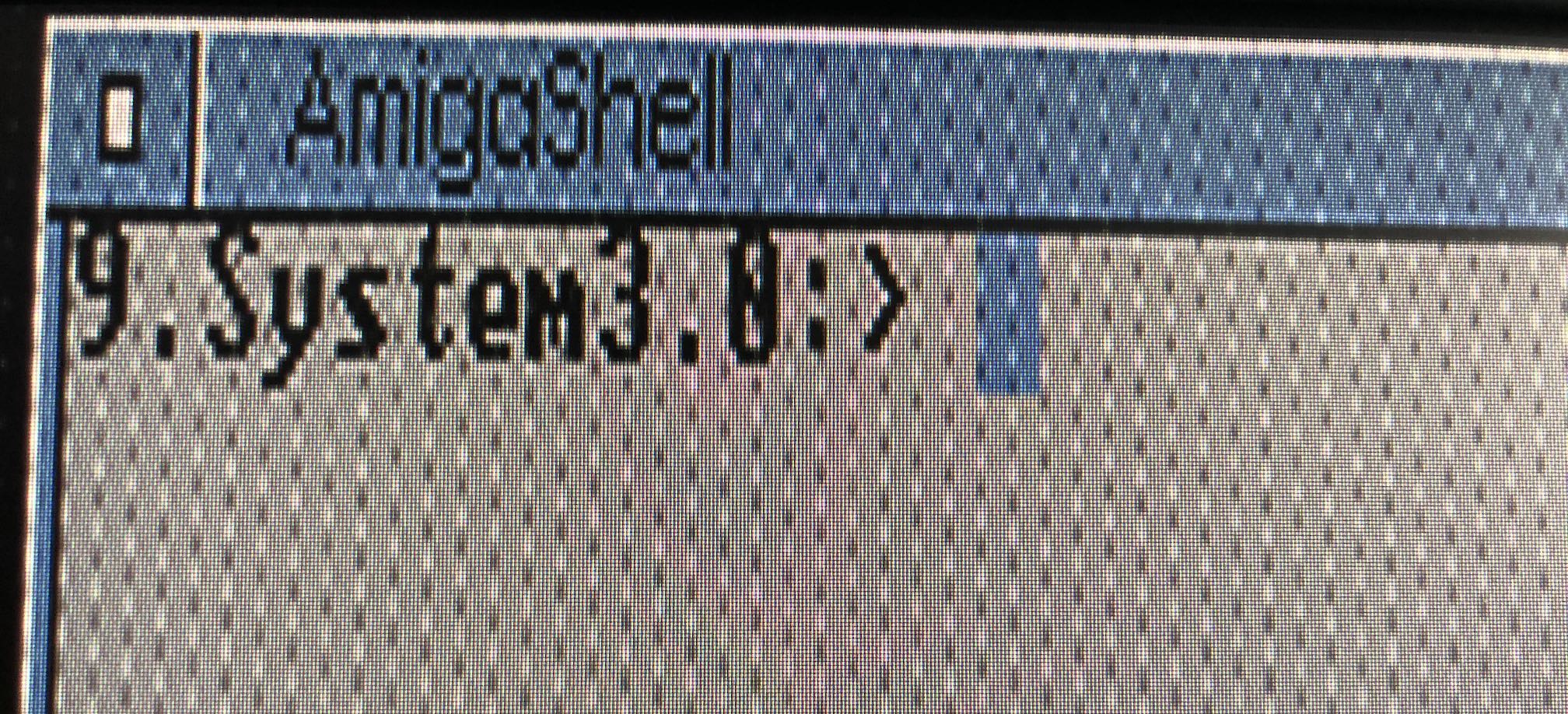

But it looks pretty bad. Note that I used a 1x1 pixel checkerboard background to be able to see what's going on more easily. As you can see, the text in the menu bar gets really blurry every few characters, but there are sharp characters, too. The image is divided into alternating sharp and blurry vertical bands.

Normally, an LCD monitor using VGA input will auto adjust to the input signal to make sure that every pixel in the original image lands on the screen as a well-defined pixel. It's actually quite amazing how well this can work if you hook up a computer that provides a 1920x1080 image to this monitor.

However, for some reason the auto adjust doesn't work with the Amiga's signal. Manually adjusting the clock and phase settings on the monitor doesn't really help, either. What does help is a program like MonSpecsMUI or MonEd, which lets you adjust the timing of the currently active screenmode. Unfortunately, none of this is really documented, so you need to keep adjusting the settings until you get something useful. In my experience, the TotClk value is the most important one. If you adjust that one, you'll see the sharp and fuzzy bands get narrower or wider.

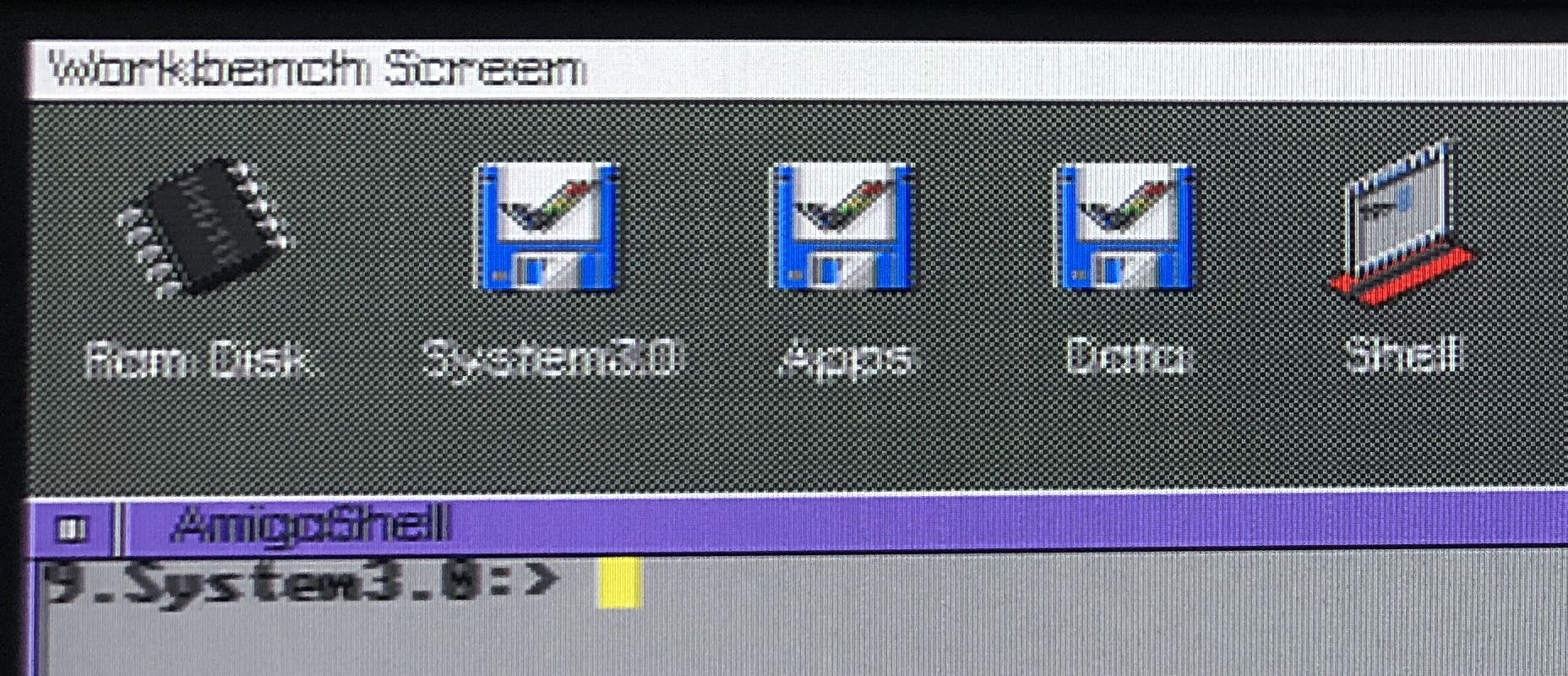

Wider is good. After that, at some point the bands disappear and the entire image is equally sharp or fuzzy. If you adjust the TotClk a lot, you may also want to adjust the TotRow to keep the vertical scanning frequency somewhere around 60 Hz (i.e., roughly between 50 and 70 Hz). The horizontal scanning frequency is best kept between 30 and 33 kHz or so, although sometimes you may get good results outside that range.
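To get a feel for how TotClk and TotRow relate to the two scanning frequencies, here's a rough back-of-the-envelope sketch in Python. It rests on assumptions of mine rather than on any official documentation: that TotClk is the number of colour clocks per scanline, that TotRow is the number of scanlines per frame, and that the colour clock runs at about 3.55 MHz on a PAL machine (about 3.58 MHz on NTSC). The function names and example values are made up for illustration, and the tools may well show values that are off by one from what the hardware actually counts.

```python
# Rough estimate of the scan frequencies from the totals shown in a tool
# like MonEd or MonSpecsMUI.
# Assumptions (mine, not from official documentation):
#   - TotClk = colour clocks per scanline
#   - TotRow = scanlines per frame
#   - colour clock is ~3.546895 MHz on a PAL Amiga (~3.579545 MHz on NTSC)

PAL_COLOUR_CLOCK_HZ = 3_546_895


def scan_frequencies(tot_clk, tot_row, colour_clock_hz=PAL_COLOUR_CLOCK_HZ):
    """Return (horizontal frequency in kHz, vertical frequency in Hz)."""
    h_hz = colour_clock_hz / tot_clk   # scanlines per second
    v_hz = h_hz / tot_row              # frames per second
    return h_hz / 1000.0, v_hz


def in_comfort_zone(h_khz, v_hz):
    """The ranges that tended to work for me: 30-33 kHz and 50-70 Hz."""
    return 30.0 <= h_khz <= 33.0 and 50.0 <= v_hz <= 70.0


if __name__ == "__main__":
    # Hypothetical (TotClk, TotRow) pairs, just to show the trend.
    for tot_clk, tot_row in [(121, 625), (114, 525), (110, 525)]:
        h_khz, v_hz = scan_frequencies(tot_clk, tot_row)
        verdict = "ok" if in_comfort_zone(h_khz, v_hz) else "outside range"
        print(f"TotClk={tot_clk} TotRow={tot_row}: "
              f"{h_khz:.1f} kHz, {v_hz:.1f} Hz ({verdict})")
```

The point is only the trend: a lower TotClk raises the horizontal frequency, and with it the vertical frequency, unless you raise TotRow to compensate.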

As far as I know, it's not dangerous to feed these signals to an LCD monitor, although back in the day there were always warnings about such experimentation. I assume those warnings mostly apply to older CRTs that don't have any logic on board to reject out-of-spec signals. CRTs from the late 1990s or early 2000s onward, and modern monitors, do have that protection.

If the image sharpness is not completely consistent from left to right, at this point it helps to adjust the display clock on the monitor. Note that the image may still look pretty bad.

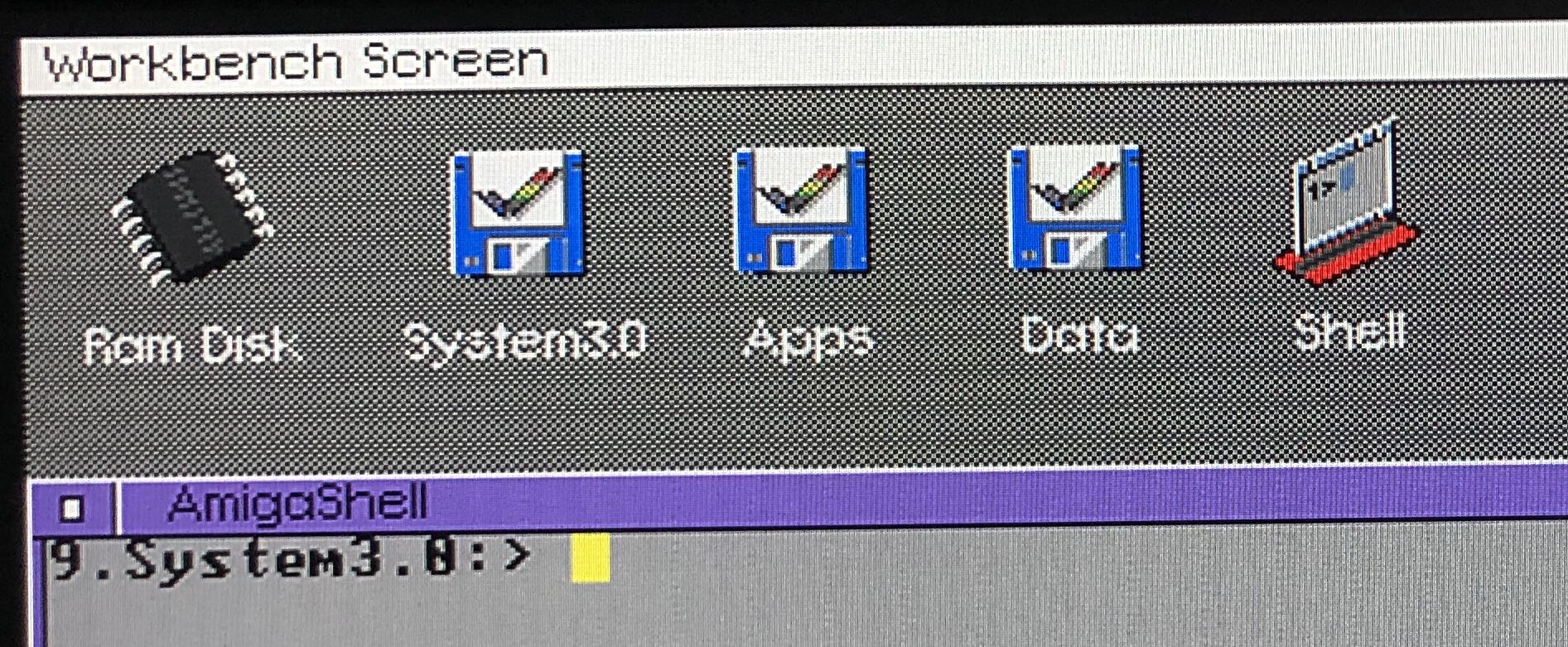

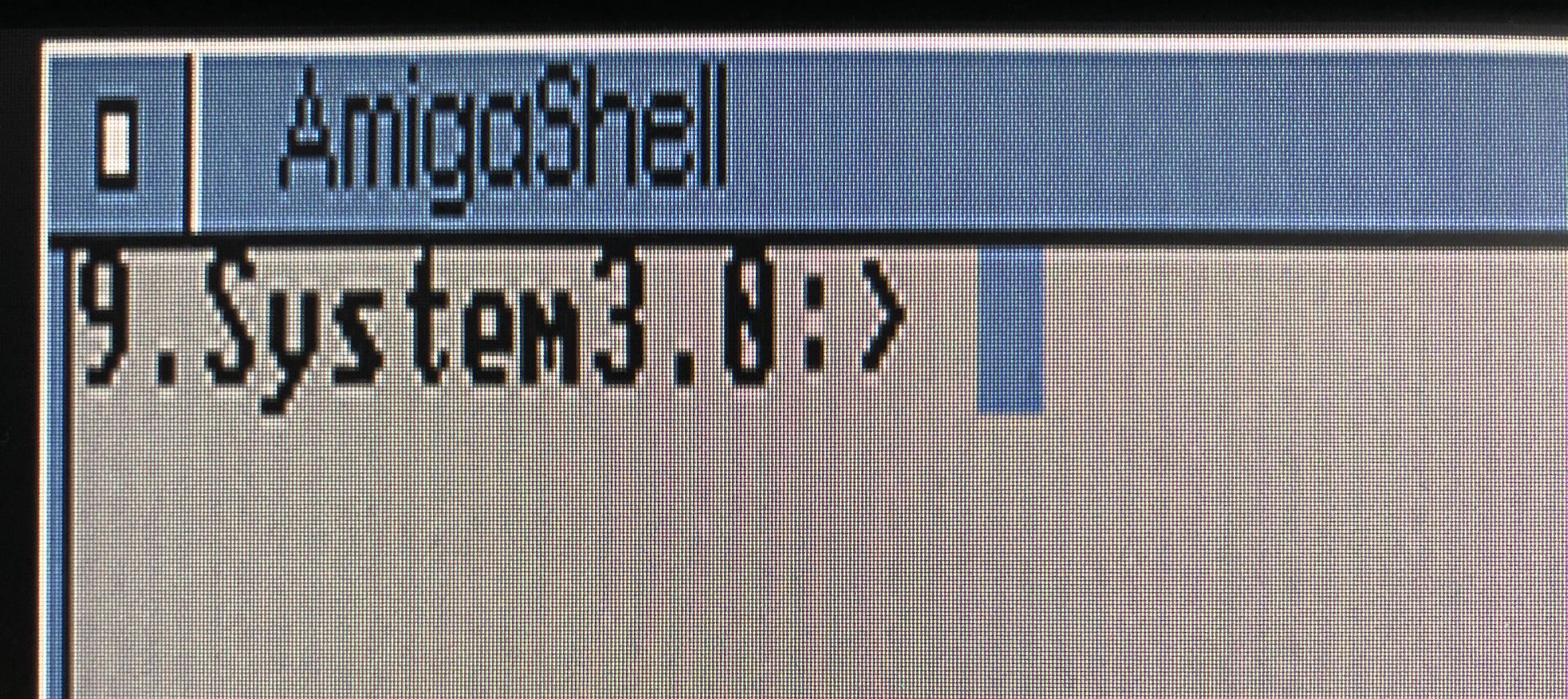

We fix that by adjusting the phase. My Amiga has always shown a little banding, but by adjusting the phase on the monitor, it's possible to get rid of that for the most part, and end up with a very acceptable image. (The white letters look a bit weird because of the checkerboard background.)
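The way clock and phase interact can be illustrated with a toy model. This is a sketch under simplifying assumptions, not a description of how this particular monitor works: it assumes the monitor takes a fixed number of evenly spaced samples per scanline (the "clock" setting), shifted by a constant offset (the "phase" setting), while the Amiga outputs its own number of pixels in the same time. All names and numbers are hypothetical.

```python
def sample_error(i, source_total, monitor_total, phase=0.0):
    """How far monitor sample i lands from the centre of a source pixel.

    0.0 means dead centre (sharp), 0.5 means right on the edge between
    two pixels (blurry). `phase` is a constant shift in source pixels.
    """
    drift = (i + 0.5) * (source_total / monitor_total - 1.0) + phase
    frac = drift % 1.0
    return min(frac, 1.0 - frac)


def count_blurry_bands(source_total, monitor_total, phase=0.0, threshold=0.25):
    """Count the contiguous runs of badly placed samples along one scanline."""
    bands = 0
    was_blurry = False
    for i in range(monitor_total):
        is_blurry = sample_error(i, source_total, monitor_total, phase) > threshold
        if is_blurry and not was_blurry:
            bands += 1
        was_blurry = is_blurry
    return bands


if __name__ == "__main__":
    source_total = 908  # hypothetical number of pixels per line from the Amiga
    for monitor_total in (912, 910, 909, 908):
        bands = count_blurry_bands(source_total, monitor_total)
        print(f"monitor clock {monitor_total}: {bands} blurry band(s)")
    # Clocks matched but phase half a pixel off: the whole line is one blurry band.
    print(count_blurry_bands(source_total, source_total, phase=0.5))
```

In this model the number of blurry bands equals the difference between the two totals, which matches what I saw: as the mismatch shrinks, the bands get wider, and once the clocks agree the whole line is equally sharp or equally fuzzy, depending only on the phase.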

Success! At this point, we can run productivity software in our chosen screenmode, and that will look pretty good. However, games and some other old software open screens at lower resolutions that aren't VGA-compatible. Fortunately, now that monitors and TVs typically use the same resolutions, many monitors can actually handle PAL and NTSC timing. But... in my case, this looked pretty horrible.

Unfortunately, adjusting the video timing with MonSpecsMUI doesn't work for the old PAL and NTSC resolutions. However, adjusting the clock and phase on the monitor was actually enough to get a very usable image, especially after lowering the sharpness a bit.

There's still a bit of noise in the image, but it's certainly not bad. There's probably some high-frequency interference ending up in the video signal that could be cleaned up with the right low-pass filter.

On this monitor, PAL and NTSC interlace resolutions flicker as badly as on the worst CRT. These resolutions are completely unusable. But hey, what else is new.

Conclusions?

Is this the ideal way to display an Amiga's video output? No. The image is pretty good, but not as clean as it could be. I would also like more control over the size and position of the image. But there's really no perfect monitor for an Amiga. Back in the day we used multisync CRTs, but those are harder and harder to get and won't last forever. Then again, VGA ports are quickly going away, too, and compatibility between an Amiga and a random LCD monitor with VGA in is a big gamble. If you've got one that works, keep it, and turn the monitor and the computer on a few times a year. That way they'll last longer.

Graphics cards are also nice on Amigas that have card slots (such as the Amiga 2000, 3000 and 4000). They use standard VGA timing, so they work with pretty much any monitor with a VGA input, and they'll give you higher resolutions and more colors. But those graphics cards won't give you the Amiga's original PAL and NTSC resolutions that you need for the old stuff.

Last but not least, there are various converters. More about that later. But again, no magic bullet. The Amiga is just too weird, flexible and old to make all of its tricks work on the much more streamlined monitors that we have today.

Update 22 April 2024:

A common issue with Amigas and VGA is the vertical stripes known as "jail bars". These are caused by high-frequency interference. It’s possible to get rid of them using this open source hardware project: AMI-RGB2VGAULTIMATE. Alternatively, you can buy a finished buffered VGA adapter with VLR (vertical line remover), also available here. Please note: I have not checked to what degree these products work as advertised.